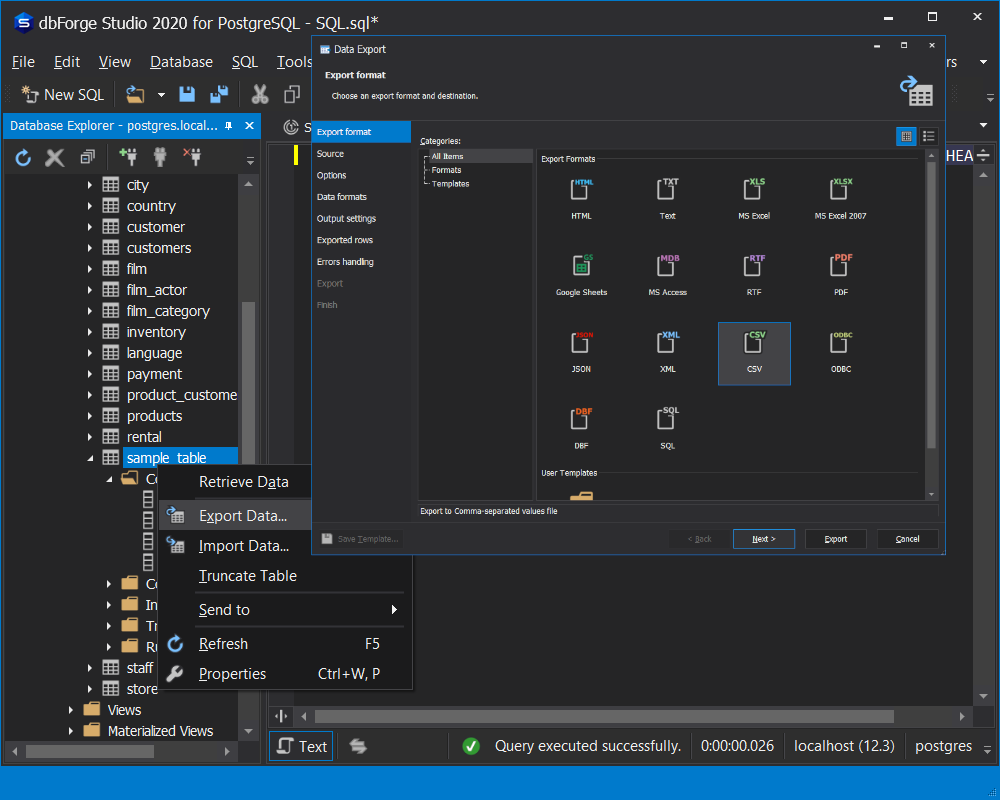

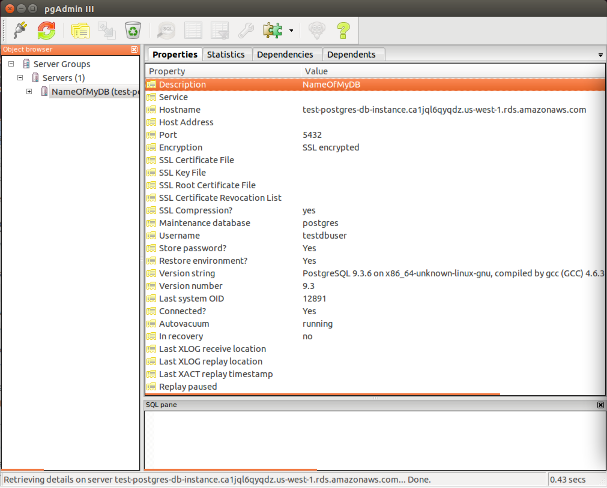

Also, if you need information on how to save data to a CSV file using Python, you can refer to the following.

According to the official documentation, COPY moves data between PostgreSQL tables and standard file-system files. COPY TO copies the contents of a table to a file, while COPY FROM copies data from a file to a table (appending the data to whatever is already in the table). COPY TO can also copy the results of a SELECT query. The COPY FROM syntax is:

`COPY table_name [ ( column_name [, ...] ) ] FROM { 'filename' | PROGRAM 'command' | STDIN } [ [ WITH ] ( option [, ...] ) ]`

If a constraint check fails while the command is executing, the command stops. To work around this, you can copy the data into a temporary table first and then insert all rows from the temporary table into the destination table, skipping the rows that violate constraints.

In MySQL, a table is exported to a CSV file with the `SELECT ... INTO OUTFILE` statement. This statement is the complement of the `LOAD DATA` command, which reads rows from a text file into a table.

Export data from Aurora PostgreSQL to Amazon S3

To export your data, connect to the cluster as the primary user (`postgres` in our case). By default, the primary user has permission to export and import data from Amazon S3.
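A minimal sketch of both COPY directions from Python, assuming a psycopg2 cursor (psycopg2's `cursor.copy_expert(sql, file)` streams `COPY ... TO STDOUT` / `COPY ... FROM STDIN` through a client-side file object). The helper names and the `staging` table name are hypothetical. The "skip bad rows" step uses `INSERT ... ON CONFLICT DO NOTHING`, which only skips unique or exclusion conflicts; NOT NULL, CHECK and foreign-key violations still abort it.

```python
from typing import IO, Any


def export_query_to_csv(cursor: Any, query: str, out: IO[str]) -> None:
    """Write the result of `query` to `out` as CSV with a header row."""
    # COPY (SELECT ...) TO STDOUT sends the rows to the client, so no access
    # to the database server's file system is needed.
    cursor.copy_expert(f"COPY ({query}) TO STDOUT WITH (FORMAT csv, HEADER)", out)


def load_csv_via_staging(cursor: Any, target: str, src: IO[str]) -> None:
    """Load CSV rows into `target` through a temporary staging table.

    `target` is interpolated directly, so it must be a trusted table name
    (psycopg2.sql.Identifier is the safer choice for untrusted input).
    """
    # 1. LIKE copies column names, types and NOT NULL constraints, but no
    #    primary-key or unique indexes, so duplicates can land in staging.
    cursor.execute(f"CREATE TEMP TABLE staging (LIKE {target} INCLUDING DEFAULTS)")
    # 2. COPY FROM appends every CSV row to the staging table.
    cursor.copy_expert("COPY staging FROM STDIN WITH (FORMAT csv, HEADER)", src)
    # 3. Move the rows across; rows that conflict with a unique constraint on
    #    `target` are skipped instead of stopping the whole statement.
    cursor.execute(f"INSERT INTO {target} SELECT * FROM staging ON CONFLICT DO NOTHING")
    # 4. Drop the staging table so the helper can be called again in this session.
    cursor.execute("DROP TABLE staging")
```

With psycopg2 you would pass `conn.cursor()` and a file opened with `open("out.csv", "w", newline="")`, then call `conn.commit()` after loading so the inserted rows persist.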

For this scenario, the PostgreSQL AWS extension (`aws_s3`) provides COPY-style functions that copy files from S3 into Amazon RDS for PostgreSQL directly. First, let's look at what the COPY command does. When you have millions of rows to insert into PostgreSQL, as a backup or for any other reason, it is often impractical to iterate over them and insert them into a table one by one.

If your data is in Azure Log Analytics instead, refer to the Azure documentation to narrow down the result set. Once you have the query results, export them to a CSV file by clicking Export -> CSV (all columns). (Note that an Azure Log Analytics workspace has a limit and only shows the first 30,000 results.)

To copy exported files (for example, from MySQL) to S3 from an EC2 instance:

1. Install awscli on your EC2 instance (it might already be installed by default).
2. Configure awscli with your credentials.
3. Use the `aws s3 sync` or `aws s3 cp` commands.
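A minimal sketch of calling the `aws_s3` functions from Python, assuming the extension is installed (`CREATE EXTENSION aws_s3 CASCADE`), the DB instance has an IAM role that can read and write the bucket, and `cursor` is a psycopg2-style cursor using `%s` placeholders. `aws_s3.table_import_from_s3`, `aws_s3.query_export_to_s3` and `aws_commons.create_s3_uri(bucket, file_path, region)` are the functions AWS documents for RDS and Aurora PostgreSQL; the Python helper names and any bucket, key or region values are hypothetical.

```python
from typing import Any, Optional, Tuple

# Import a CSV object from S3 into an existing table (COPY FROM semantics).
IMPORT_SQL = (
    "SELECT aws_s3.table_import_from_s3("
    "%s, %s, %s, aws_commons.create_s3_uri(%s, %s, %s))"
)

# Export the result of a query to an S3 object (COPY TO semantics).
EXPORT_SQL = (
    "SELECT * FROM aws_s3.query_export_to_s3("
    "%s, aws_commons.create_s3_uri(%s, %s, %s), options := %s)"
)


def import_csv_from_s3(cursor: Any, table: str, bucket: str, key: str,
                       region: str, columns: str = "") -> Optional[Tuple]:
    """Load s3://bucket/key into `table`; an empty `columns` means all columns."""
    # The import options are COPY options and are written in parentheses.
    cursor.execute(IMPORT_SQL, (table, columns, "(format csv, header true)",
                                bucket, key, region))
    return cursor.fetchone()  # a one-column row with a status message


def export_query_to_s3(cursor: Any, query: str, bucket: str, key: str,
                       region: str) -> Optional[Tuple]:
    """Write the result of `query` to s3://bucket/key as CSV with a header."""
    # The export options are also COPY options, but without parentheses.
    cursor.execute(EXPORT_SQL, (query, bucket, key, region, "format csv, header true"))
    return cursor.fetchone()  # (rows_uploaded, files_uploaded, bytes_uploaded)
```

Passing the table name, query and S3 location as bound parameters keeps quoting correct, because all of them are plain `text` arguments to these SQL functions.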
